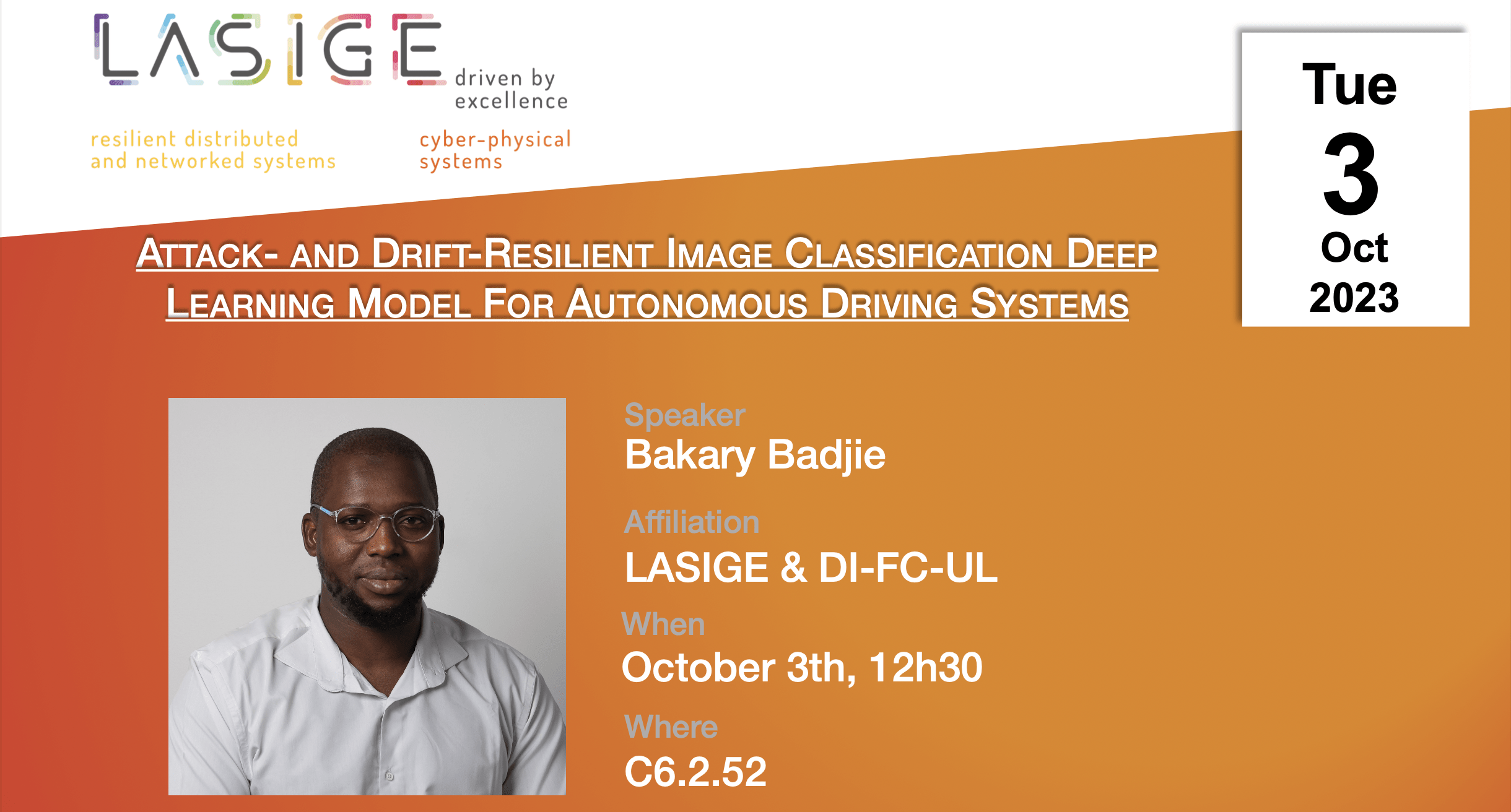

Speaker: Bakary Badjie (LASIGE, DI/FCUL)

Date: October 03, 2023, 12h30

Where: C6.2.52

Talk: Attack- and Drift-Resilient Image Classification Deep Learning Model For Autonomous Driving Systems

Abstract: Image Classification Machine Learning (ICML) models are widely used in Autonomous Driving Systems (ADSs), enabling them to analyze, interpret, and respond to visual information from their surroundings and make informed driving decisions. These models are trained to identify and classify dynamic and static objects in the driving environment, such as other vehicles, pedestrians, traffic signs, and road markings. However, they are vulnerable to adversarial attacks, in which an adversary deliberately injects perturbations into the input image, and to data drift, in which the underlying distribution of the input data changes over time. Both reduce classification accuracy and can compromise the safety of the ADS. The research objective of this proposal is to devise a Resilient Expert-Based Neural Network Predictive Framework for Tolerant Inference (ResilientNet) capable of robustifying an ICML model against adversarial attacks and data drift within the context of Autonomous Driving (AD). The proposed framework aims to robustly detect and classify adversarial, unseen, and drifted inputs within the AD environment. This approach intends to overcome the limitations of the current state of the art by improving the robustness and generalization capabilities of ICML models in AD against adversarial and drifted inputs. The expected outcome of this research is a novel approach that provides a practical and effective solution to the problem of adversarial attacks and data drift in AD. This contribution would aid in achieving reliable AD by offering a formal robustness guarantee for deploying ICML models in the AD environment. The overarching objective is to facilitate the deployment of reliable and safe Autonomous Vehicles (AVs).